Multimodal Target Tracking with Heterogeneous Sensor Networks

Introduction

Recent advances in information, micro-scale, and embedded vision technologies are enabling the vision of ambient intelligence and smart environments. Such environments will react attentively, adaptively, and actively to the presence and activities of humans and other objects in order to provide intelligent services. Heterogeneous sensor networks (HSNs) with embedded vision, acoustic, and possibly other sensor modalities are a pivotal technology for realizing these systems.

HSNs with multiple sensing modalities are gaining popularity in many fields because they can support multiple applications with diverse resource needs. Multiple sensing modalities also provide flexibility and robustness. However, different sensors may have different requirements in terms of processing, memory, or bandwidth (e.g., microphones vs. cameras). To match these requirements to the available resources, an HSN may combine nodes with various capabilities to support several sensing tasks.

We have developed an HSN consisting of audio and video sensors for multitarget tracking in an urban environment. If a moving target emits sound, both audio and video sensors can be used. The two modalities complement each other: video can compensate when high background noise impairs the audio, and audio can compensate when visual clutter affects the video. Either modality can also provide cues to the other for actuation.

The approach we have taken for target tracking and inference in urban environments uses an HSN of mote-class devices equipped with acoustic sensor boards and embedded PCs equipped with web cameras. The components of the system perform audio processing, video processing, audio-video sensor fusion, multiple-target tracking, and inference of target activities.
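The page does not describe the fusion rule itself. As one hedged illustration of how the two modalities can complement each other, the sketch below assumes that each modality produces a likelihood map over a shared, flattened grid of candidate target positions, and that audio and video measurements are conditionally independent given the target position, so the maps can be multiplied and renormalized. The function name and the grid representation are hypothetical.

```python
def fuse_likelihoods(audio, video, eps=1e-12):
    """Fuse two per-cell likelihood maps by elementwise product, then normalize.

    Assumes audio and video measurements are conditionally independent given
    the target position x, so p(a, v | x) = p(a | x) * p(v | x).
    Each value is floored at eps so a single zero cannot veto a cell outright
    and the normalizing sum is never zero.
    """
    if len(audio) != len(video):
        raise ValueError("likelihood maps must cover the same grid")
    fused = [max(a, eps) * max(v, eps) for a, v in zip(audio, video)]
    total = sum(fused)
    return [f / total for f in fused]


# A nearly flat (noisy) audio map and a sharply peaked video map over 5 cells.
audio_map = [0.2, 0.2, 0.25, 0.2, 0.15]
video_map = [0.05, 0.05, 0.1, 0.7, 0.1]
print(fuse_likelihoods(audio_map, video_map))
```

With a noisy audio map the fused estimate follows the video peak (cell 3); with cluttered video and a clean audio map, the roles would reverse.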

The main problem in multiple-target tracking is to find tracks from noisy observations, which requires solving both the data association and the state estimation problems. Our system employs a Markov Chain Monte Carlo Data Association (MCMCDA) algorithm to track vehicles emitting engine noise. MCMCDA is a data-oriented, combinatorial optimization approach to the data association problem that avoids the enumeration of tracks.
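In its general form, MCMCDA samples over partitions of observations from a window of time steps into tracks and false alarms. The sketch below is a deliberately simplified, single-scan illustration of the underlying idea, not the system's implementation: a Metropolis-Hastings random walk over association hypotheses that never enumerates them all. It assumes 1-D measurements, known predicted target positions (which in practice would come from the state estimation step), a Gaussian measurement model, a detection probability, and a uniform clutter density. All function names and parameter values are hypothetical.

```python
import math
import random


def log_gauss(x, mean, sigma):
    """Log-density of a 1-D Gaussian N(mean, sigma^2) evaluated at x."""
    return -0.5 * ((x - mean) / sigma) ** 2 - math.log(sigma * math.sqrt(2 * math.pi))


def log_posterior(assign, obs, targets, sigma, p_detect, clutter_density):
    """Unnormalized log-posterior of one association hypothesis.

    assign[j] is the index of the target that produced obs[j], or -1 for clutter.
    Returns -inf if two observations claim the same target (invalid hypothesis).
    """
    used = [a for a in assign if a >= 0]
    if len(used) != len(set(used)):
        return -math.inf
    score = 0.0
    for j, a in enumerate(assign):
        if a < 0:
            score += math.log(clutter_density)  # false alarm
        else:
            score += math.log(p_detect) + log_gauss(obs[j], targets[a], sigma)
    missed = len(targets) - len(used)
    score += missed * math.log(1.0 - p_detect)  # undetected targets
    return score


def mcmc_associate(obs, targets, sigma=1.0, p_detect=0.9,
                   clutter_density=1e-3, n_iter=2000, seed=0):
    """Metropolis-Hastings over association hypotheses; returns the best one seen."""
    rng = random.Random(seed)
    labels = list(range(-1, len(targets)))  # -1 means clutter
    current = [-1] * len(obs)  # start: every observation is clutter
    cur_lp = log_posterior(current, obs, targets, sigma, p_detect, clutter_density)
    best, best_lp = list(current), cur_lp
    for _ in range(n_iter):
        # Symmetric proposal: relabel one random observation uniformly at random.
        proposal = list(current)
        proposal[rng.randrange(len(obs))] = rng.choice(labels)
        prop_lp = log_posterior(proposal, obs, targets, sigma, p_detect, clutter_density)
        # Accept with probability min(1, p(proposal) / p(current));
        # invalid proposals have prop_lp = -inf and are always rejected.
        if rng.random() < math.exp(min(0.0, prop_lp - cur_lp)):
            current, cur_lp = proposal, prop_lp
            if cur_lp > best_lp:
                best, best_lp = list(current), cur_lp
    return best


# Two predicted targets at 0 and 10; the reading at 50 should be labeled clutter.
print(mcmc_associate([0.1, 9.8, 50.0], [0.0, 10.0]))
```

Because the proposal is symmetric, the acceptance ratio reduces to the posterior ratio, so only the unnormalized score is ever needed. A full MCMCDA sampler adds moves such as track birth, death, split, and merge across multiple scans.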

Architecture

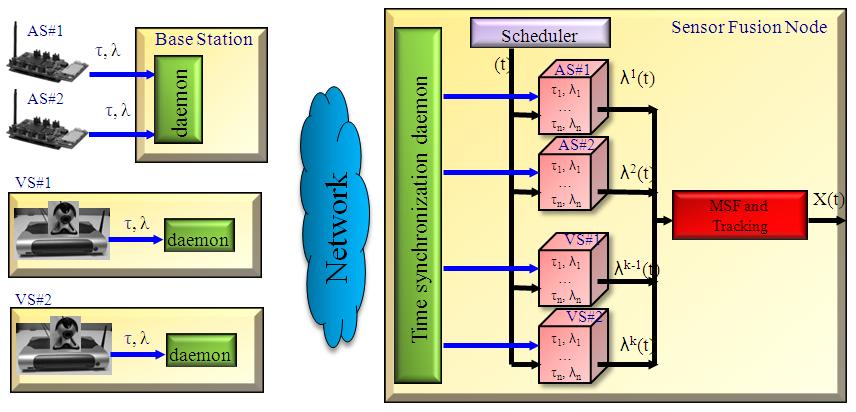

The architecture of the system is shown below

The audio sensors, MICAz motes with acoustic sensor boards equipped with a 4-microphone array, form an 802.15.4 network. This network does not need to be connected; it can consist of multiple connected components as long as each of them has a dedicated mote-PC gateway. The video sensors are based on Logitech QuickCam Pro 4000 cameras attached to OpenBrick-E Linux embedded PCs. The video sensors, the mote-PC gateways, and the sensor fusion node are all PCs forming a peer-to-peer 802.11b wireless network.
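The connectivity requirement on the acoustic network can be phrased as a simple check: every connected component of the 802.15.4 mote graph must contain at least one mote attached to a PC gateway. The sketch below is a hypothetical deployment-planning helper, not part of the described system; the mote IDs, the link list, and the function names are illustrative assumptions.

```python
from collections import defaultdict, deque


def components(nodes, links):
    """Return the connected components of an undirected graph as a list of sets (BFS)."""
    adj = defaultdict(set)
    for a, b in links:
        adj[a].add(b)
        adj[b].add(a)
    seen, comps = set(), []
    for start in nodes:
        if start in seen:
            continue
        comp, queue = set(), deque([start])
        seen.add(start)
        while queue:
            n = queue.popleft()
            comp.add(n)
            for m in adj[n]:
                if m not in seen:
                    seen.add(m)
                    queue.append(m)
        comps.append(comp)
    return comps


def uncovered_components(motes, radio_links, gateway_motes):
    """Components of the mote network that contain no mote-PC gateway."""
    gateways = set(gateway_motes)
    return [c for c in components(motes, radio_links) if not (c & gateways)]


# Motes 1-3 and 4-5 each reach a gateway; mote 6 is isolated without one.
print(uncovered_components([1, 2, 3, 4, 5, 6], [(1, 2), (2, 3), (4, 5)], [1, 4]))
```

An empty result means the deployment satisfies the requirement even if the mote network as a whole is disconnected.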

Deployment & Evaluation

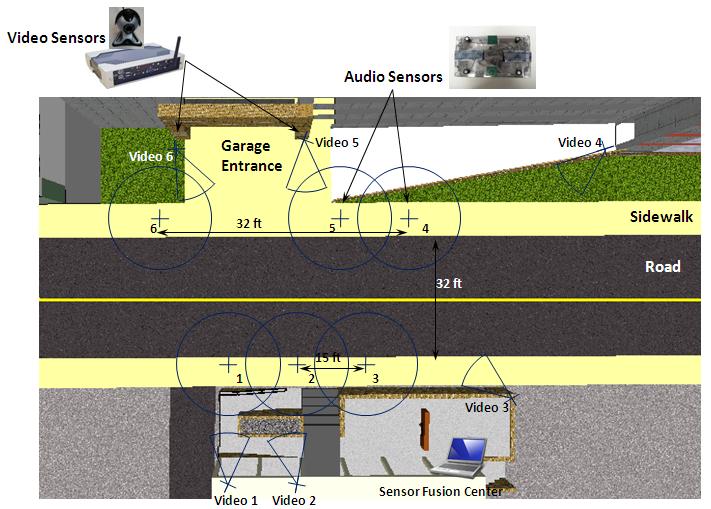

The deployment of the multi-modal target tracking system is shown in figure below. We employ 6 audio sensors and 6 video sensors deployed on either side of a road. The sensing region covers a 20x20 meters area including a 20m section of the road and a garage entrance.

The complex urban street environment presents many challenges, including gradual changes in illumination, sunlight reflections from windows, glints from cars, high visual clutter due to swaying trees, high background acoustic noise due to construction, and acoustic multipath effects.

The full video of the target tracking system demonstration can be downloaded here.

The book chapter from the Handbook of Ambient Intelligence and Smart Environments (AISE 2009) can be downloaded here.

The conference papers can be downloaded here (MFI 2008) and here (ICCCN 2008).

This project is part of the MURI project. Information and a technical overview of the project can be found at http://www.truststc.org/hsn/.

Visit the NEST page for information on our other projects.

Resources

To watch and listen to the HSN MURI review meeting, click here (password required).