Assurance-based Learning-enabled Cyber-Physical Systems (ALC)

Autonomous vehicles (cars, drones, underwater vehicles, etc.) started using software components built using machine learning techniques. This is due to the requirement that these vehicles must operate in highly uncertain environments and that it is very hard to design a correct algorithm for all possible situations. Instead, we collect data from a real or simulated environment and train, using machine learning algorithm, a general-purpose component - typically a neural net - to perform a certain function. Such components are called Learning-Enabled Components (LECs). However, the challenge is that the training data cannot cover all possible cases, yet we need to know that the system works safely and has acceptable performance. This project is doing fundamental research and building tools for supporting the engineering of such systems. The tools are for modeling the system (e.g., an underwater vehicle), executing the training and testing of the learning-based components, and building formal arguments (called assurance cases) to show that the system is safe.

Autonomous vehicles (cars, drones, underwater vehicles, etc.) started using software components built using machine learning techniques. This is due to the requirement that these vehicles must operate in highly uncertain environments and that it is very hard to design a correct algorithm for all possible situations. Instead, we collect data from a real or simulated environment and train, using machine learning algorithm, a general-purpose component - typically a neural net - to perform a certain function. Such components are called Learning-Enabled Components (LECs). However, the challenge is that the training data cannot cover all possible cases, yet we need to know that the system works safely and has acceptable performance. This project is doing fundamental research and building tools for supporting the engineering of such systems. The tools are for modeling the system (e.g., an underwater vehicle), executing the training and testing of the learning-based components, and building formal arguments (called assurance cases) to show that the system is safe.

Web: https://alc.isis.vanderbilt.edu/redmine/projects/alc-project-public/documents

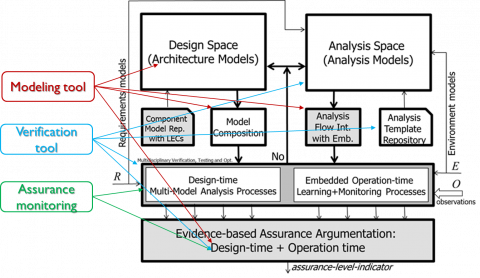

The project follows a three-pronged approach. One effort looks into applying formal verification techniques, namely reachability and robustness analysis via model checking to trained neural network. These methods can show that the if the input to the neural net remains in a bounded subspace then the output remains in a bounded subspace as well. In addition, they can validate the robustness of the (classifier) network with respect to additive disturbance on the inputs; i.e., if the disturbance is bounded, then the output will never provide an erroneous classification. A second effort develops algorithms for detecting if a trained neural network receives an input that is not in the same probability distribution that the training data was drawn from. These algorithms are called ‘assurance monitors’ and are largely based on the principles of Inductive Conformal Prediction, and can calculate a quantitative ‘assurance measure’ that indicates to what degree the output of the neural network can be trusted. The third effort integrates the techniques described above into a comprehensive software and systems engineering toolchain for the design, construction, integration, and testing of cyber-physical systems with LECs. The toolchain provides end-to-end support for the construction: training, testing, and verification of LECs, as well as for system-level activities, like assurance argument construction.

The measures of success are related to the functionality and the performance of the algorithms for verification and assurance monitoring, as well as to the functionality and the usability of the toolchain. The verification algorithms shall be scalable to non-trivial neural networks, like the VGG-16 model, and shall complete the model checking tasks within acceptable time constraints (e.g. less than 60 mins in design time and under the time constraint imposed by the system dynamics at run-time). The assurance monitor techniques shall have a very low False Negative ratio (indicative of not reporting a problem with the underlying neural network) and a low False Positive ratio (indicative of reporting a problem whed there is none). The toolchain is successful if (1) it provides unique and novel functions for autonomy software stack developers, and (2) it is usable by skilled engineers who construct such software systems.

The project produces three technologies and one demonstrative example. The first technology is related to the formal and algorithmic verification of the neural networks: their safety and robustness properties. The second technology is related to the assurance monitoring of the neural networks and calculating the assurance measures. Both of these technologies are implemented in the form of algorithms (i.e., prototype executable code) that can be applied both at design-time and at run-time. The third technology is manifested in an integrated toolchain that includes a model-based and a code-based development environment, and provides a high degree of design automation for the engineering of CPS with LECs. The toolchain includes not only modeling tools and code development tools, but provides dedicated domain specific languages for automating the tasks of neural network training and evaluation. The demonstrative example includes a small mobile robot, similar in kinematics to autonomous underwater vehicles (AUVs), equipped with sensors like sonars and Lidars, as well as a complete software stack for the autonomous operation of the robot. The robot’s mission software provides services analogous to services found in AUVs, including waypoint navigation, infrastructure (e.g. pipeline) following, obstacle avoidance, etc., all executed autonomously. The software stack includes several LECs and serves as an example for an CPS with assured autonomy functions.